AI-Driven Legacy Modernization: Integrating Machine Intelligence into ERP, SCADA, and On-Premise Systems

Integrating AI into legacy systems is one of the most critical — and most underestimated — engineering challenges in enterprise digital transformation. Most AI initiatives don’t fail because of the model. They fail because the data lives in a 15-year-old SAP instance, a SCADA historian with a proprietary protocol, or an on-premise Oracle database that no one wants to touch.

The AI layer is the easy part. Getting clean, consistent, real-time data out of entrenched legacy systems — and closing the loop back into operational workflows — is where projects stall.

This guide covers the technical patterns, integration strategies, and architectural decisions that engineering teams need to understand before selecting a vendor for AI modernization projects involving legacy infrastructure.

Table of Contents

- Why Legacy AI Integration Fails

- Integration Patterns by System Type

- Vertical Integration: Connecting AI Across Systems

- Architectural Principles

- Vendor Evaluation Criteria

Why Legacy AI Integration Fails

Legacy systems were designed for transactional integrity, not analytical accessibility. ERP systems optimize for record-keeping. SCADA systems optimize for real-time control. On-premise databases optimize for ACID compliance. None of them were designed to serve feature vectors to a machine learning model at inference time.

The gap between where your data lives and where your AI needs it creates three distinct integration challenges:

- Extraction: Getting data out of systems that weren’t designed to be queried at scale

- Normalization: Resolving schema inconsistencies, unit mismatches, and temporal misalignment across systems

- Actuation: Feeding model outputs back into legacy workflows without breaking existing processes

A vendor that can only address one or two of these three is not delivering a complete solution — they’re delivering a prototype that your team will struggle to operationalize.
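
To make the normalization challenge concrete, here is a minimal sketch of resolving a unit mismatch and a temporal misalignment between two sources. The `Reading` type, the `degC`/`degF` unit labels, and the 60-second bucket width are assumptions chosen for illustration, not part of any specific product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Reading:
    source: str   # hypothetical system label, e.g. "scada" or "erp"
    tag: str      # logical measurement name shared across systems
    value: float
    unit: str     # "degC" or "degF" in this sketch
    ts: datetime  # timezone-aware timestamp


def to_celsius(value: float, unit: str) -> float:
    """Convert a temperature to degC; reject units we do not recognise."""
    if unit == "degC":
        return value
    if unit == "degF":
        return (value - 32.0) * 5.0 / 9.0
    raise ValueError(f"unknown temperature unit: {unit!r}")


def bucket(ts: datetime, seconds: int = 60) -> datetime:
    """Floor a timestamp to a fixed-width UTC bucket."""
    epoch = int(ts.astimezone(timezone.utc).timestamp())
    return datetime.fromtimestamp(epoch - epoch % seconds, tz=timezone.utc)


def normalize(readings):
    """Return {(bucket, tag): mean value in degC} across all sources."""
    sums = {}
    for r in readings:
        key = (bucket(r.ts), r.tag)
        total, count = sums.get(key, (0.0, 0))
        sums[key] = (total + to_celsius(r.value, r.unit), count + 1)
    return {key: total / count for key, (total, count) in sums.items()}
```

Raising on an unknown unit, rather than passing the value through, keeps a silent unit mismatch from reaching the model as a plausible-looking feature.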

The Legacy AI Integration Stack

flowchart TB

subgraph AI["AI / ML Layer"]

A1[Model Training]

A2[Inference Engine]

A3[Monitoring & Drift Detection]

end

subgraph INT["Integration Layer"]

I1[Feature Store]

I2[API Gateway]

I3[CDC / Stream Processor]

end

subgraph SRC["Legacy Systems"]

S1["ERP\n(SAP / Oracle)"]

S2["SCADA / IoT\n(OPC-UA / MQTT)"]

S3["On-Premise DB\n& APIs"]

end

S1 -->|OData / BAPI / CDC| I2

S2 -->|OPC-UA / REST| I3

S3 -->|ETL / CDC| I3

I2 --> I1

I3 --> I1

I1 --> A1

I1 --> A2

A2 -->|Predictions| I2

I2 -->|Write-back| S1

A3 -.->|Alerts| I2

Key insight: The integration layer is the critical middle tier. Most AI project failures occur here, not in the model.

Integration Patterns by System Type

ERP Systems: AI Integration with SAP and Oracle

ERP systems — SAP, Oracle EBS, Oracle Fusion — are the backbone of procurement, finance, manufacturing, and supply chain operations. They hold decades of transactional history that is extremely valuable for demand forecasting, anomaly detection, and process optimization AI use cases.

The integration challenge: SAP and Oracle expose data through a mix of BAPIs, IDocs, OData APIs, and database views. Direct database access is strongly discouraged and risks breaking vendor support agreements.

Real-time AI integration with SAP and Oracle ERP requires one of these approaches:

- SAP Integration Suite / Oracle Integration Cloud — native middleware providing event-driven data feeds without direct DB access

- Change Data Capture (CDC) via Debezium, Oracle GoldenGate, or SAP Landscape Transformation — streams transactional changes to a downstream data platform

- RFC/BAPI polling — viable for lower-frequency batch use cases but introduces latency and ERP performance overhead

| Method | Latency | ERP Load | Risk Level | Best For |
|---|---|---|---|---|
| SAP Integration Suite / OIC | Low–Medium | Low | Low | Event-driven real-time feeds |
| CDC (Debezium, GoldenGate) | Near-real-time | Very Low | Medium | High-volume streaming to data platform |
| RFC/BAPI Polling | Medium–High | Medium | Low | Batch use cases, lower frequency |
| Direct DB Access | Low | High | High | ⚠ Avoid: breaks vendor support |
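
As an illustration of the CDC approach, the sketch below applies Debezium-style change events to an in-memory table keyed by primary key. It assumes the standard Debezium envelope fields (`op`, `before`, `after`), optionally wrapped in a `payload` object; the `id` key field and the sample rows are hypothetical.

```python
import json


def apply_change(state: dict, raw_event: str, key_field: str = "id") -> dict:
    """Apply one change event to `state`, a dict of primary key -> latest row."""
    event = json.loads(raw_event)
    # Some converter configurations wrap the envelope in {"schema": ..., "payload": ...}.
    payload = event.get("payload", event)
    op = payload["op"]
    if op in ("c", "u", "r"):      # create, update, snapshot read
        row = payload["after"]
        state[row[key_field]] = row
    elif op == "d":                # delete: only "before" carries the row
        row = payload["before"]
        state.pop(row[key_field], None)
    else:
        raise ValueError(f"unhandled change operation: {op!r}")
    return state


if __name__ == "__main__":
    table = {}
    apply_change(table, json.dumps({"op": "c", "before": None, "after": {"id": 1, "qty": 5}}))
    apply_change(table, json.dumps({"op": "u", "before": {"id": 1, "qty": 5}, "after": {"id": 1, "qty": 7}}))
    print(table)
```

In a real pipeline the events would arrive from a message broker and the state would live in a downstream store; the point here is only that the ERP database is never queried directly.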

What to ask a vendor: Can they operate without direct database credentials? Do they have certified SAP or Oracle connector experience? Can they handle IDoc parsing and BAPI schema evolution as ERP versions are patched?

AI use cases for ERP systems:

- Demand forecasting using historical sales orders and inventory movements

- Supplier risk scoring using procurement and delivery performance data

- Predictive maintenance scheduling using work order and asset history

- Invoice anomaly detection using AP transaction patterns

Closing the loop: AI outputs must land back in the ERP as actionable records — purchase order suggestions, maintenance work orders, flagged transactions — via sanctioned APIs (SAP BAPI calls, Oracle REST APIs), not direct DB writes. Vendors who skip this step are delivering dashboards, not operational AI.
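
One practical concern with write-back is that a retried pipeline run should not create duplicate records. The sketch below derives an idempotency key from the suggestion itself. `InMemoryErpGateway` is a hypothetical stand-in for a sanctioned ERP API client; a real one would issue a BAPI or REST call where the comment indicates.

```python
import hashlib
import json


class InMemoryErpGateway:
    """Stand-in for a sanctioned ERP API client (hypothetical interface)."""

    def __init__(self):
        self.requisitions = {}

    def create_requisition(self, key: str, payload: dict) -> str:
        # A real client would call a BAPI or REST endpoint here and
        # pass `key` along so the ERP side can reject duplicates.
        if key not in self.requisitions:
            self.requisitions[key] = payload
        return key


def idempotency_key(payload: dict) -> str:
    """Stable short hash of the payload, independent of dict key order."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def write_back(gateway, suggestion: dict) -> str:
    """Send one AI-generated suggestion through the gateway exactly once."""
    return gateway.create_requisition(idempotency_key(suggestion), suggestion)
```

Calling `write_back` twice with the same suggestion leaves a single requisition in the gateway, which is the behavior a retrying scheduler needs.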

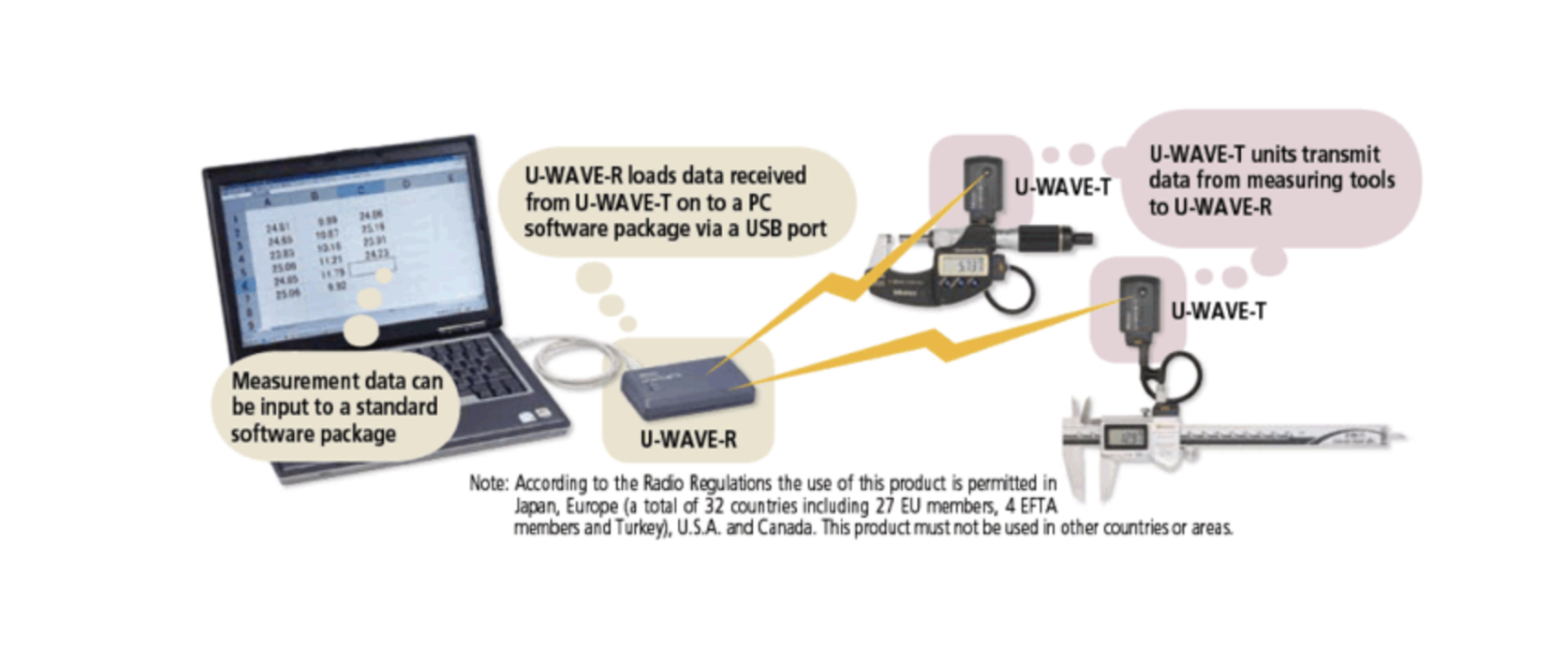

SCADA and Industrial IoT: Machine Learning for OT Environments

SCADA AI integration presents a fundamentally different profile from ERP integration. Data volumes are high (sensor polling at 1–100 Hz is common), latency requirements are strict, and the consequences of integration errors can be physical: equipment damage, safety incidents, and regulatory violations.

The integration challenge: SCADA historians (OSIsoft PI, Wonderware, Ignition) use proprietary time-series storage formats and query languages. OPC-UA and OPC-DA are the dominant industrial protocols, but OPC-DA is Windows-only, COM/DCOM-based, and notoriously difficult to bridge to containerized environments.

Modern SCADA machine learning integration approaches:

- OPC-UA to MQTT bridging: Convert OPC-UA tag subscriptions to MQTT topics, then ingest via a broker (Mosquitto, EMQX, AWS IoT Core) into a stream processing layer

- Historian REST APIs: OSIsoft PI Web API and Ignition’s built-in REST interface provide time-series queries without proprietary SDK dependencies

- Edge computing layer: Deploy lightweight inference containers (ONNX Runtime, TensorFlow Lite) on industrial edge hardware to perform inference close to the source
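
The OPC-UA to MQTT bridging step largely comes down to a naming convention. As a rough sketch, the function below maps a string-type OPC-UA NodeId to an MQTT topic and builds a JSON payload. The `ot` topic prefix, the dot-separated tag hierarchy, and the payload field names are assumptions for illustration; no broker connection is shown.

```python
import json
import re


def node_id_to_topic(node_id: str, prefix: str = "ot") -> str:
    """Map 'ns=2;s=Plant1.Line3.Pump7.Vibration' to 'ot/ns2/plant1/line3/pump7/vibration'."""
    match = re.fullmatch(r"ns=(\d+);s=(.+)", node_id)
    if match is None:
        raise ValueError(f"not a string-type NodeId: {node_id!r}")
    namespace, identifier = match.groups()
    # Replace anything outside a safe set so '/', '+', and '#' never
    # leak into a topic level, where MQTT gives them special meaning.
    levels = [
        re.sub(r"[^a-z0-9_-]", "_", part.lower())
        for part in identifier.split(".")
    ]
    return "/".join([prefix, f"ns{namespace}", *levels])


def to_payload(value: float, source_timestamp: str, quality: str = "Good") -> str:
    """Serialize one tag sample; field names are illustrative."""
    return json.dumps(
        {"value": value, "ts": source_timestamp, "quality": quality},
        sort_keys=True,
    )
```

Keeping the mapping deterministic means downstream consumers can subscribe with wildcards such as `ot/ns2/plant1/+/+/vibration` without a lookup table.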

Network segmentation is a hard constraint: Most industrial environments enforce strict IT/OT network separation (Purdue Model, ISA-95). A vendor proposing to pull raw SCADA data directly to a cloud ML platform without addressing the DMZ is not production-ready.

Purdue Model: Where AI Fits in OT Network Architecture

flowchart TB

subgraph L4["Level 4 — Enterprise Network"]

ERP["ERP / IT Systems"]

AI["AI Platform\n(Training + Monitoring)"]

end

subgraph DMZ["DMZ / Firewall"]

GW["Data Diode / Secure Gateway"]

end

subgraph L3["Level 3 — Operations Network"]

HIST["Historian\n(OSIsoft PI / Ignition)"]

EDGE["Edge Inference Node\n(ONNX / TFLite)"]

end

subgraph L2["Level 2 — SCADA / HMI"]

SCADA["SCADA / HMI"]

end

subgraph L1["Level 1–0 — Field"]

PLC["PLC / DCS / Sensors\n(1–100Hz polling)"]

end

PLC -->|OPC-UA| SCADA

SCADA -->|Tag subscriptions| HIST

HIST -->|REST API| GW

GW -->|Normalized time-series| AI

HIST --> EDGE

EDGE -->|Inference results| GW

GW -->|Work orders / alerts| ERP

AI inference should run at Level 3 or at the edge (Level 2–3 boundary). Never pull raw sensor data across the DMZ to cloud for real-time inference.

AI use cases for SCADA and Industrial IoT:

| Use Case | Input Data | Model Type | Output |
|---|---|---|---|
| Predictive Maintenance | Vibration, temp, pressure | Time-series anomaly detection | Failure probability + ETA |
| Process Optimization | Multivariate sensor streams | Regression / RL | Setpoint recommendations |
| Quality Control | Inline sensor + production params | Classification | Pass/fail + root cause |
| Anomaly Detection | Multivariate sensor baseline | Autoencoder / isolation forest | Deviation score + alert |
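
To show what a "deviation score + alert" output can look like, here is a deliberately simple stand-in: a univariate rolling z-score. It is not an autoencoder or isolation forest, and the window size and threshold of 3.0 are assumptions, but it is small enough to run on edge hardware and illustrates the input/output contract.

```python
import math
from collections import deque
from statistics import mean, pstdev


class RollingZScore:
    """Score each sample against a sliding window of recent samples."""

    def __init__(self, window: int = 50, threshold: float = 3.0):
        self.values = deque(maxlen=window)
        self.threshold = threshold

    def score(self, x: float):
        """Return (z, is_anomaly) for x, then add x to the window."""
        if len(self.values) < 2:
            z = 0.0                     # not enough history to judge
        else:
            mu = mean(self.values)
            sigma = pstdev(self.values)
            if sigma == 0:
                # Flat baseline: any change is an unbounded deviation.
                z = 0.0 if x == mu else math.copysign(math.inf, x - mu)
            else:
                z = (x - mu) / sigma
        self.values.append(x)
        return z, abs(z) > self.threshold


if __name__ == "__main__":
    detector = RollingZScore(window=40)
    for sample in [10.0, 10.2] * 20 + [15.0]:
        z, alert = detector.score(sample)
    print(alert)  # the final 15.0 sample is flagged
```

A production model would typically be multivariate and account for operating modes, but the scoring interface can stay the same.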

Key vendor evaluation question: Have they deployed in OT environments before? Do they understand the difference between historian time-series and event-driven SCADA alarms? Can they work within air-gapped or DMZ-constrained network topologies?

On-Premise Databases and Internal APIs: AI Integration Without Cloud Egress

This category covers the long tail of legacy infrastructure: PostgreSQL and SQL Server instances running core business logic, REST/SOAP APIs wrapped around decade-old monolithic applications, and file-based data exports (CSV, XML, EDI) from systems too old to expose APIs.

The integration challenge: These systems are heterogeneous, poorly documented, and often maintained by engineers who hold institutional knowledge that isn’t written down anywhere. Schema changes are infrequent but impactful. API contracts are informal and version-inconsistent.

Practical on-premise AI integration approaches:

- Semantic layer / data virtualization: A federated query engine such as Trino, paired with a modeling or governance layer such as dbt or Databricks Unity Catalog, can provide a unified query interface over disparate on-premise sources without physically moving data

- API gateway abstraction: Wrapping legacy SOAP services or undocumented REST endpoints behind a governed API gateway (Kong, Azure APIM) creates a stable integration surface even as underlying systems evolve

- ETL to feature store: Scheduled ETL pipelines (Airflow, Prefect) can extract, normalize, and load features into a feature store (Feast, Tecton, Hopsworks) that the ML model consumes without touching production databases at inference time

What to watch for: Vendors who propose reading directly from production OLTP databases for real-time inference introduce query load that degrades application performance. Insist on read replicas, materialized views, or caching layers between production systems and AI inference paths.
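
A caching layer of the kind described above can be very small. The sketch below wraps any lookup function (for example, a query against a read replica) in a time-to-live cache. The `loader` callable, the 30-second TTL, and the injectable `clock` are illustrative assumptions.

```python
import time


class TtlCache:
    """Serve repeated lookups from memory until an entry is older than the TTL."""

    def __init__(self, loader, ttl_seconds: float = 30.0, clock=time.monotonic):
        self._loader = loader      # function that hits the replica or view
        self._ttl = ttl_seconds
        self._clock = clock        # injectable so tests can control time
        self._entries = {}         # key -> (loaded_at, value)

    def get(self, key):
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]
        value = self._loader(key)
        self._entries[key] = (now, value)
        return value
```

With this in place, a burst of inference requests for the same entity results in one database read per TTL window instead of one per request.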

Vertical Integration: Connecting AI Across Legacy System Boundaries

AI-driven vertical integration becomes genuinely powerful when AI connects data flows that were previously siloed — building intelligence that spans ERP, SCADA, and operational databases simultaneously.

Case Example: Predictive Maintenance with Automated Procurement

A manufacturer running SAP for procurement and OSIsoft PI for equipment monitoring has two data sources that have never communicated. A vertically integrated AI layer connects them in a closed loop:

sequenceDiagram

participant PI as OSIsoft PI Historian

participant EDGE as Edge Inference Node

participant AI as AI / ML Platform

participant SAP as SAP ERP

participant ENG as Engineering Team

PI->>EDGE: Vibration + temp streams (OPC-UA)

EDGE->>EDGE: Run anomaly detection model

EDGE->>AI: Failure probability score + sensor snapshot

AI->>SAP: Query spare parts inventory & lead times (OData)

SAP-->>AI: Stock levels + supplier lead times

AI->>AI: Calculate procurement urgency score

alt Lead time > predicted failure window

AI->>SAP: Create purchase requisition (BAPI)

AI->>ENG: Maintenance recommendation alert

else Within safe window

AI->>ENG: Advisory notification only

end

This architecture closes operational loops across system boundaries that previously required manual handoffs between maintenance, procurement, and operations teams, eliminating days of latency from a process that previously depended on human coordination.

This requires a vendor who can do systems integration, not just ML. Many AI vendors are strong on modeling but treat integration as someone else’s problem. For legacy modernization projects, integration capability is at least as important as modeling capability.
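
The branching step in the sequence above is a single comparison between supplier lead time and the predicted failure window. A minimal sketch, with hypothetical field and action names, might look like this:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assessment:
    failure_probability: float      # score from the edge model
    days_to_failure: float          # predicted failure window
    supplier_lead_time_days: float  # from the ERP lookup


def decide(a: Assessment) -> list:
    """Return the actions to take, mirroring the alt/else branch of the flow."""
    if a.supplier_lead_time_days > a.days_to_failure:
        # The part cannot arrive in time unless it is ordered now.
        return ["create_purchase_requisition", "maintenance_recommendation"]
    return ["advisory_notification"]


if __name__ == "__main__":
    print(decide(Assessment(0.9, days_to_failure=10, supplier_lead_time_days=21)))
    print(decide(Assessment(0.9, days_to_failure=30, supplier_lead_time_days=21)))
```

The logic itself is trivial; the engineering effort lies in getting trustworthy values for both inputs from two systems that have never shared data.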

Architectural Principles for Legacy AI Integration

Regardless of vendor, insist on these five principles when evaluating any legacy AI integration architecture:

1. Non-invasive extraction: AI pipelines must not modify legacy system configurations or schemas, or degrade system performance. Use CDC, historian APIs, and read replicas for extraction, and route any write-back through sanctioned APIs, never direct database writes to production systems.

2. Decoupled inference: The AI model must not sit on the critical path of legacy system operations. If the ML service goes down, ERP transactions and SCADA control loops must continue unaffected.

3. Bidirectional audit trail: Every AI-generated action touching a legacy system — work order creation, purchase requisition, alert generation — must be traceable back to the model version, input data, and timestamp that produced it. This is both an operational and compliance requirement in regulated industries.

4. Schema evolution tolerance: Legacy systems change. AI pipelines must handle schema drift gracefully — failing loudly on unexpected changes rather than silently producing incorrect features.

5. Incremental deployment: Full cutover is high risk. Require a shadow mode deployment phase where AI recommendations run in parallel with existing manual processes before any automation is activated.
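
As one way to read principle 4, the sketch below checks each extracted row against an expected schema and raises instead of guessing. The column names in `EXPECTED_ORDER_SCHEMA` and the `SchemaDriftError` type are hypothetical.

```python
# Hypothetical expected schema for one extracted table.
EXPECTED_ORDER_SCHEMA = {"order_id": int, "material": str, "quantity": float}


class SchemaDriftError(Exception):
    """Raised when an extracted row no longer matches the expected schema."""


def check_schema(row: dict, expected: dict) -> None:
    """Raise SchemaDriftError on missing, unexpected, or mistyped columns."""
    missing = sorted(set(expected) - set(row))
    unexpected = sorted(set(row) - set(expected))
    wrong_type = sorted(
        name
        for name, typ in expected.items()
        if name in row and not isinstance(row[name], typ)
    )
    if missing or unexpected or wrong_type:
        raise SchemaDriftError(
            f"missing={missing} unexpected={unexpected} wrong_type={wrong_type}"
        )
```

Running this check at the start of the feature pipeline turns a renamed or retyped column into an immediate, visible failure rather than a slow degradation in model quality.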

How to Evaluate AI Vendors for Legacy System Integration

When assessing vendors for legacy AI modernization, go beyond model benchmarks. The differentiating questions are in the integration layer:

| Evaluation Dimension | What to Ask | Red Flag |
|---|---|---|
| Connector depth | Do they have production connectors for your ERP version / historian? | "We support SAP" with no reference deployments |
| OT/IT boundary experience | Have they worked within Purdue Model network topologies? | Proposing direct cloud egress from OT network |
| Write-back capability | Can they demo closed-loop actuation into your legacy system? | Delivering dashboards only, no write-back |
| Data residency | Can the pipeline run fully on-premise or private cloud? | Requires cloud-only deployment |
| MLOps handoff | Who owns monitoring and retraining post-deployment? | Permanent vendor dependency with no handoff plan |
| IP & data ownership | Who owns model weights and training data after engagement? | Vague or absent IP clause in contract |

Frequently Asked Questions

Can AI be integrated with legacy ERP systems without replacing them?

Yes. Non-invasive integration patterns — CDC, OData APIs, BAPI connectors — allow AI pipelines to extract data from and write results back to ERP systems like SAP and Oracle without modifying core system configurations or requiring system replacement.

What is the safest way to integrate AI with SCADA systems?

Deploy inference at the edge (Level 2–3 of the Purdue Model) and use a secure DMZ gateway for data transfer between OT and IT networks. Never pull raw sensor data directly to a cloud ML platform across OT/IT network boundaries.

How long does a legacy AI integration project typically take?

Timelines vary significantly by system complexity and data maturity. A focused proof-of-concept for a single system (e.g., ERP demand forecasting) can take 8–12 weeks. Full vertical integration spanning ERP, SCADA, and on-premise databases typically requires 6–18 months for production deployment.

What data governance controls are needed for on-premise AI deployments?

At minimum: data lineage tracking, role-based access controls on feature stores and model endpoints, model versioning with rollback capability, and audit logging for all AI-generated write-backs to production systems.
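
Audit logging for write-backs can start as a small structured record per action. The following is a sketch under assumed field names: it ties each action to a model version, a hash of the exact input data, and a UTC timestamp.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(action, target_system, model_version, inputs, created_at=None):
    """Build one audit entry for an AI-generated write-back."""
    # Canonical JSON so the same inputs always produce the same hash.
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    stamp = created_at or datetime.now(timezone.utc)
    return {
        "action": action,
        "target_system": target_system,
        "model_version": model_version,
        "input_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "created_at": stamp.isoformat(),
    }


if __name__ == "__main__":
    record = audit_record(
        "create_purchase_requisition",
        "SAP",
        "1.2.0",
        {"asset": "pump7", "failure_probability": 0.91},
    )
    print(json.dumps(record, indent=2))
```

Storing a hash rather than the raw inputs keeps the log compact; the inputs themselves would need to be retained elsewhere for the hash to be verifiable later.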

Conclusion

Legacy systems aren’t going away — and the most valuable enterprise AI opportunities sit precisely at the intersection of old infrastructure and new intelligence. The engineering challenge isn’t building the model. It’s building the integration layer that makes the model operational within the constraints of systems designed decades before machine learning was a practical consideration.

Define your integration surface clearly, pressure-test vendor claims against your specific system versions and network topology, and prioritize closed-loop architectures over dashboards. The difference between an AI proof-of-concept and a production system that delivers measurable value is almost always in the plumbing.

Looking to evaluate vendors for your legacy AI integration project? Use the vendor scorecard table above as a starting point for your RFP process.
